8/28/2023

Kafka Connect Prometheus exporter

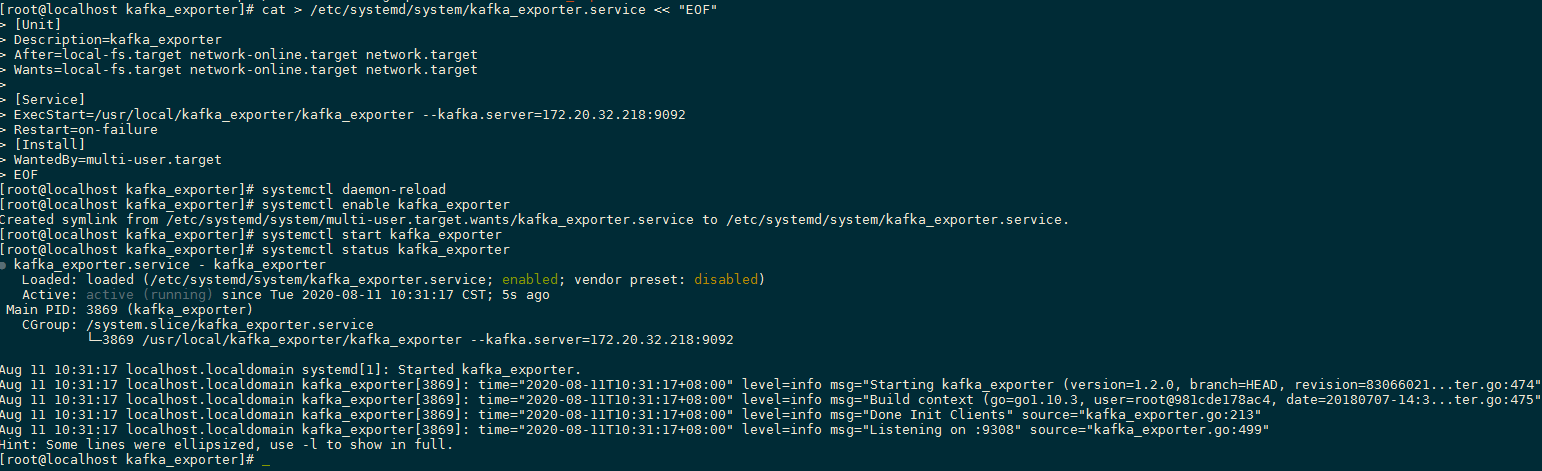

This article provides a step-by-step tutorial on deploying Kafka Connect on Kubernetes. Strimzi is among the richest Kubernetes Kafka operators, and you can use it to deploy Apache Kafka or its other components, such as Kafka Connect and Kafka MirrorMaker. In this post, I'll use Kafka as an example of a Java application that you want to monitor.

Prometheus exporters extract metrics from third-party systems and export them to your Prometheus instance as Prometheus metrics. Apache Kafka runs on the JVM, so we need a Java exporter to scrape (extract) its metrics so that Prometheus can consume, store, and expose them. The exporter connects to Java's native metric collection system, Java Management Extensions (JMX), and converts the metrics into a format that Prometheus can understand. Grafana can connect to different data sources and databases.

The following sections list the names of the categories and metrics provided by the Snowflake Kafka connector.

The number of files currently on an internal stage. This property provides an estimate of how many files are currently on an internal stage. This value is decremented after the process of purging the files has started.

The number of files in Snowpipe, determined by calling the insertFiles REST API. A single REST API request is currently limited to 5k files. There is no one-to-one relation between the number of files and the number of REST API calls, so the number of calls to the insertFiles REST API can be larger than this value. The value of this property is 0 if there are no more files to be ingested.

The number of files on the table stage that failed ingestion.

The number of files present on the table stage that correspond to a broken offset.

The number of files purged from the internal stage after the ingestion status was determined.
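As a rough illustration of what "a format that Prometheus can understand" means, the sketch below renders one sample in the Prometheus text exposition format. The function name `to_prometheus_sample`, the metric name `files-on-stage`, and the label values are hypothetical placeholders, and this is not how the JMX exporter itself is implemented. It only shows the shape of the output and the fact that characters such as hyphens are not valid in Prometheus metric names.

```python
import re


def escape_label_value(value: str) -> str:
    """Escape backslash, double quote, and newline as the text format requires."""
    return value.replace("\\", "\\\\").replace('"', '\\"').replace("\n", "\\n")


def to_prometheus_sample(name: str, labels: dict[str, str], value: float) -> str:
    """Render one sample line in the Prometheus text exposition format."""
    # Metric names may only contain [a-zA-Z0-9_:], so replace anything else.
    metric = re.sub(r"[^a-zA-Z0-9_:]", "_", name)
    if not labels:
        return f"{metric} {value}"
    rendered = ",".join(
        f'{key}="{escape_label_value(val)}"' for key, val in sorted(labels.items())
    )
    return f"{metric}{{{rendered}}} {value}"


# Hypothetical metric and labels, used only to show the output shape.
line = to_prometheus_sample(
    "files-on-stage", {"connector": "my_connector", "pipe": "my_pipe"}, 3
)
print(line)  # files_on_stage{connector="my_connector",pipe="my_pipe"} 3
```

Carrying the connector and pipe as labels rather than folding them into the metric name keeps a single metric queryable across all pipes, which is the usual Prometheus convention.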
The general format of the Kafka Connector MBean object name is:

:connector=connector_name,pipe=pipe_name,category=category_name,name=metric_name

connector=connector_name specifies the name of the connector defined in the Kafka configuration file. pipe=pipe_name specifies the Snowpipe object used to ingest data. The Kafka connector defines Snowpipe objects for each partition. category=category_name specifies the category of the MBean. name=metric_name specifies the name of the metric.
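To make the object name format concrete, here is a minimal Python sketch that splits such a name into its key properties. The domain `example.domain`, the values `my_connector`, `my_pipe`, `my_category`, and `my_metric`, and the function name `parse_object_name` are all hypothetical placeholders. The sketch also assumes that property values contain no commas or quotes, which real JMX object names may permit.

```python
def parse_object_name(object_name: str) -> tuple[str, dict[str, str]]:
    """Split 'domain:key=value,key=value' into (domain, properties)."""
    domain, _, property_list = object_name.partition(":")
    properties = {}
    for pair in property_list.split(","):
        key, sep, value = pair.partition("=")
        if not sep:
            raise ValueError(f"malformed key property: {pair!r}")
        properties[key.strip()] = value.strip()
    return domain, properties


# Hypothetical object name; the domain and all values are placeholders.
domain, props = parse_object_name(
    "example.domain:connector=my_connector,pipe=my_pipe,"
    "category=my_category,name=my_metric"
)
print(props["pipe"])  # my_pipe
print(props["name"])  # my_metric
```

A monitoring script could use the `connector` and `pipe` properties to group metrics per Snowpipe object, since the connector defines one per partition.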

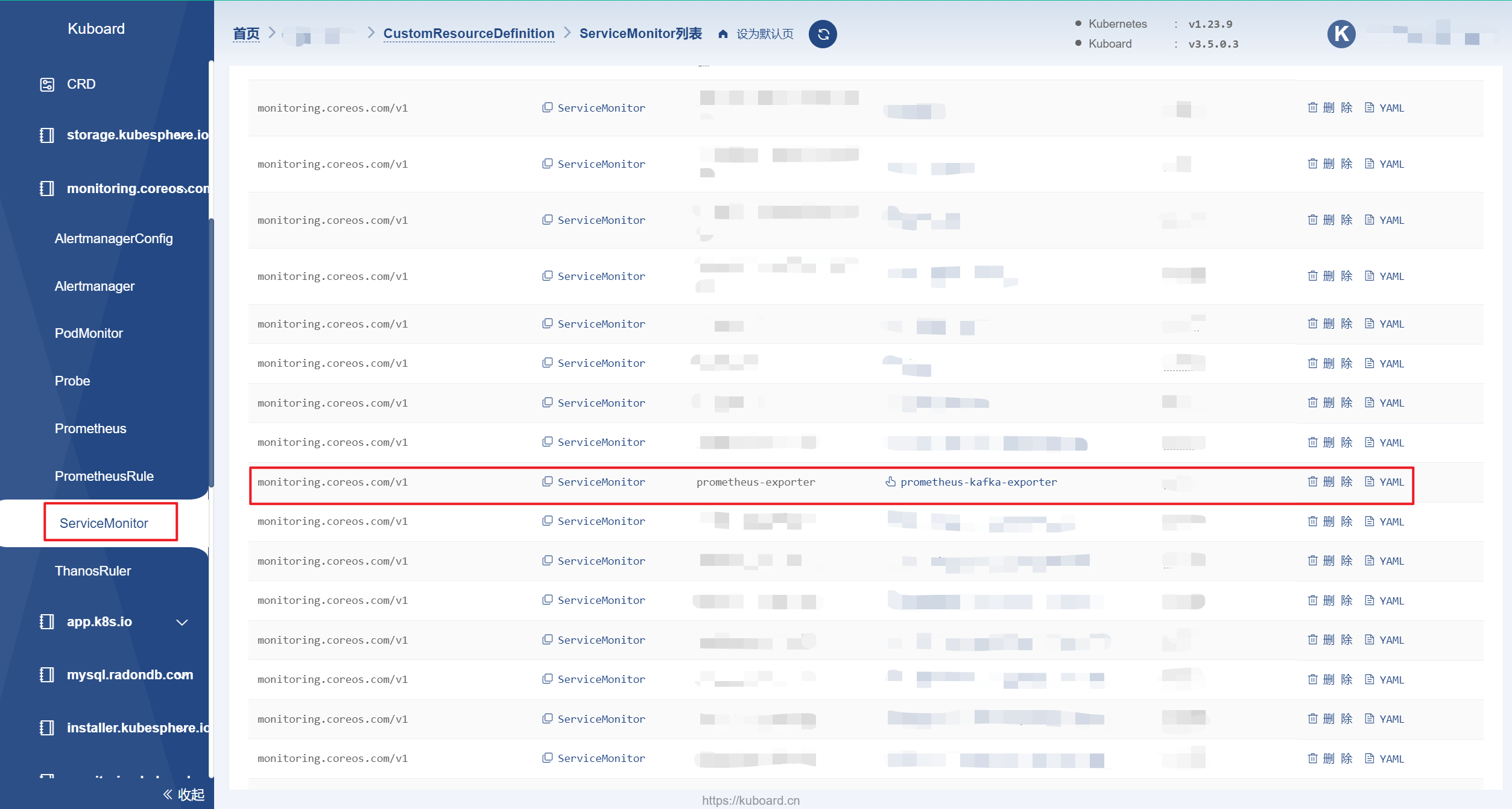

Using the Snowflake Kafka Connector Managed Beans (MBeans)

JMX uses MBeans to represent objects within Kafka that it can monitor. The Snowflake Kafka connector provides MBeans for accessing objects managed by the connector. You can use these MBeans to create monitoring dashboards. When you have Prometheus and Grafana enabled, Kafka Exporter provides additional monitoring related to consumer lag.
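Consumer lag is commonly defined as the gap between a partition's latest (log end) offset and the offset a consumer group has committed. The sketch below computes it from two plain dictionaries. The function name `consumer_lag` and the sample offsets are hypothetical, and treating a partition with no committed offset as starting from 0 is a simplifying assumption. It does not claim to reproduce how Kafka Exporter calculates its metrics.

```python
def consumer_lag(
    end_offsets: dict[int, int], committed: dict[int, int]
) -> dict[int, int]:
    """Per-partition lag: log end offset minus the group's committed offset."""
    lag = {}
    for partition, end in end_offsets.items():
        # Assumption: a partition with no committed offset counts as unread from 0.
        lag[partition] = max(end - committed.get(partition, 0), 0)
    return lag


# Hypothetical offsets keyed by partition number.
end_offsets = {0: 120, 1: 80, 2: 45}
committed = {0: 100, 1: 80}

lag = consumer_lag(end_offsets, committed)
print(lag)                # {0: 20, 1: 0, 2: 45}
print(sum(lag.values()))  # 65
```

A dashboard would typically plot the per-partition values as well as the total, since a single stuck partition can hide behind a healthy-looking sum.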
